The AI Skills Gap That Junior Auditors, and Their Firms, Can No Longer Afford to Ignore

AI is removing the repetitive fieldwork that trained junior auditors. Here's what the skills gap looks like - and how firms and trainees can close it.

According to the Journal of Accountancy, AI-assisted audit tools are delivering 20–30% reductions in fieldwork time. CPA Trendlines puts document review acceleration at 69% for firms deploying AI at scale. These are not projections. They are current results, reported by practitioners who have already made the switch.

The efficiency gains are real, and they are genuinely good for audit quality. Faster cycle times, broader transaction coverage, fewer manual errors - the case for AI adoption in audit is not in dispute.

But there is a secondary effect that the industry has been slower to name. The tasks being compressed (transaction testing, document sampling, balance sheet reconciliation, confirmation chasing) were not just low-value outputs to be optimized away. They were the mechanism by which junior auditors built judgment, pattern recognition, and professional instinct. They were, in the most literal sense, how the work trained the people doing it.

When those tasks shrink, the productivity gain is captured immediately. The development cost shows up later - in cohorts that are technically credentialled but experientially thin, in managers carrying a heavier review burden, in client-facing moments where junior staff are expected to contribute more than their preparation supports.

The question no one is asking loudly enough: if those tasks are gone, where does that development happen now?

What Junior Auditors Actually Learned From Repetitive Fieldwork

To understand what is being lost, it helps to be specific about what repetitive audit fieldwork actually taught. Not the tasks themselves, but the competencies that accumulated through doing them.

Ticking and tying transactions. Pulling and reviewing samples. Reconciling balance sheet items across periods. Working through an audit program line by line, chasing down every open item. These are the tasks that a first-year associate spends the majority of their time on.

The surface read is that this work is mechanical. The more accurate read is that it is structured exposure to professional judgment under real conditions.

Here is what that exposure was actually building:

Pattern recognition across large data sets.

Reviewing thousands of transactions trains an auditor to notice when something is slightly off. An unusual counterparty, a timing anomaly, a size inconsistent with the account history. That instinct is not taught in a classroom. It is accumulated through repetition.

Calibrated scepticism.

You develop the ability to sense when a number looks wrong by first being wrong about it, then finding out why. The feedback loop of spotting a discrepancy, investigating it, and either resolving it or escalating it is how junior auditors learn what warrants attention and what does not.

Financial statement literacy.

Working through the detail of a set of accounts. Not reviewing them, but reconciling them, line by line. This builds a structural understanding of how financial statements fit together and where errors are most likely to hide.

Communication habits.

Asking a client for supporting documentation. Documenting a finding clearly enough for a manager to review without asking three follow-up questions. Explaining why a number cannot be signed off yet. These are communication skills, and they were being built through daily practice.

Tolerance for ambiguity and methodical problem-solving.

Audit fieldwork rarely produces clean answers. Learning to work through an ambiguous position - methodically, under time pressure, with a manager waiting for the answer - is a professional competency in its own right.

None of this was formally taught. It was absorbed through months of supervised repetition with real consequences. The analogy to a medical resident is imperfect but instructive: the learning was in the doing, and removing the doing removes the learning mechanism, not just the task.

The AI Skills Gap Junior Auditors Now Face. What the Data Shows.

The AI skills gap junior auditors are now facing has two distinct dimensions, and conflating them produces the wrong response.

The first is the AI literacy gap. Most junior auditors entering the workforce have not been trained to interpret AI-generated outputs in an audit context. They have not been taught to identify where AI tools flag correctly, where they miss, or what the underlying data inputs mean for the reliability of the output. They are expected to use tools they do not fully understand - which is precisely the condition that professional scepticism is supposed to prevent.

The second is the foundational competency gap. A 20–30% reduction in fieldwork time means proportionally less supervised exposure for trainees in their first two years. The volume of transactions reviewed, the number of samples pulled, the hours spent working through audit programs - all of it is compressed. The feedback loops that build judgment are thinner and less frequent.

Together, these gaps describe a specific kind of capability risk: auditors who are qualified by examination but not yet calibrated by experience, working in an environment that now requires them to exercise more judgment, earlier, with less of the exposure that used to prepare them for it.

Firms have adopted AI tools faster than they have adapted their early career auditor development model. The efficiency gain has been captured. The development cost has not yet been fully accounted for.

The solution starts with AI literacy training. Not advanced data science, but applied critical thinking for professionals who use AI every day and need to know when to trust it and when not to.

Why Judgment Doesn't Develop Automatically

There is an assumption worth stating clearly before dismantling it: many firms believe that reduced fieldwork time will be offset by higher-quality work conversations, better supervision, or more time for meaningful engagement. The theory is that juniors will learn more by doing less but better.

This assumption underestimates what judgment actually requires.

Professional judgment is not innate, and it is not transmitted through conversation. It is built through specific, repeated experiences that provide feedback - the experience of being wrong, finding out why, adjusting, and being tested again. Supervision can guide this process. It cannot substitute for the exposure itself.

Calibrated scepticism - knowing when a number warrants a second look - develops because an auditor has looked at enough numbers to have a baseline. Financial statement intuition develops because an auditor has reconciled enough line items to understand how the pieces connect. Communication discipline develops because an auditor has drafted enough findings to learn what a manager will push back on.

When the volume of that exposure drops, the feedback loops become thinner. Auditors reach the point of carrying client conversations, reviewing junior work, and making judgment calls on complex items without having accumulated the same foundation that their predecessors had at the same career stage.

Senior readiness slips. The review burden on managers increases. Client-facing confidence is lower than the role requires. These are the downstream consequences of removing the scaffold without replacing it.

The structure is still expected to stand.

The Four Skills That Must Now Be Built Deliberately

There is a defined, finite set of practical skills that can close this gap. They are teachable and measurable. Firms and trainees have the same list. The difference is who takes responsibility for building them.

1. AI Literacy. Using the Tool Without Trusting It Blindly

AI literacy training for auditors is not about learning to build models or write code. It is about understanding what the tools you are already using can and cannot do in an audit context: where they flag correctly, where they miss, and why the underlying data inputs matter for the reliability of the output.

Practically, this means: interpreting AI-generated findings with professional scepticism, identifying the conditions under which an AI tool is likely to produce a false positive or miss a material item, and knowing how to document your reasoning when the output informed - but did not make - the judgment call.
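To make "where they flag correctly, where they miss" concrete, one simple exercise is to compare a tool's flags against a sample you have already tested manually. The Python sketch below is illustrative only. The `SampleItem` structure, the `compare_ai_to_manual` function, the sample data and the materiality figure are all hypothetical, and a real audit tool's output will look different. It counts four things: false positives (flagged, but no exception on testing), misses (not flagged, but an exception was found), which of those misses are at or above materiality, and two summary ratios.

```python
from dataclasses import dataclass

@dataclass
class SampleItem:
    """One manually tested transaction (hypothetical structure, not a real tool's export)."""
    txn_id: str
    amount: float
    ai_flagged: bool       # did the AI tool flag this item?
    exception_found: bool  # did manual testing confirm an exception?

def compare_ai_to_manual(sample, materiality):
    """Compare AI flags with manual test results on the same sample."""
    true_pos = [s for s in sample if s.ai_flagged and s.exception_found]
    false_pos = [s for s in sample if s.ai_flagged and not s.exception_found]
    misses = [s for s in sample if not s.ai_flagged and s.exception_found]
    flagged = len(true_pos) + len(false_pos)
    actual = len(true_pos) + len(misses)
    return {
        "false_positives": [s.txn_id for s in false_pos],
        "misses": [s.txn_id for s in misses],
        # Misses at or above materiality are the ones that matter most.
        "material_misses": [s.txn_id for s in misses if s.amount >= materiality],
        # Share of flags that were real exceptions (None if nothing was flagged).
        "precision": len(true_pos) / flagged if flagged else None,
        # Share of real exceptions the tool caught (None if there were none).
        "recall": len(true_pos) / actual if actual else None,
    }

# Hypothetical sample of four tested items.
sample = [
    SampleItem("T1", 1_200.0, ai_flagged=True, exception_found=True),
    SampleItem("T2", 300.0, ai_flagged=True, exception_found=False),
    SampleItem("T3", 85_000.0, ai_flagged=False, exception_found=True),
    SampleItem("T4", 450.0, ai_flagged=False, exception_found=False),
]
result = compare_ai_to_manual(sample, materiality=50_000.0)
print(result["material_misses"])  # ['T3']
```

In this made-up sample the tool caught half of the real exceptions, and the one it missed is material. That is the kind of finding worth documenting alongside the AI output, rather than relying on the tool's flags alone.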

2. Data Analysis. Reading What the Numbers Are Actually Saying

The pattern recognition that fieldwork used to build must now be taught directly. Data analysis training for audit trainees means learning to work with large structured data sets, identify anomalies, visualise distributions, and draw defensible conclusions from what the data shows.

This is the practical replacement for the instincts that transaction testing used to develop. The tools - Excel for data manipulation, Power BI for visualisation, basic statistical literacy - are accessible. What is missing is structured instruction in applying them in a real audit context. For auditors, these data skills are no longer optional; they are the new baseline.
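As one illustration of "identify anomalies," the sketch below flags current-period transactions whose size is inconsistent with the account's own history. This is the same signal that manual transaction testing used to train. It uses a plain z-score against each account's past amounts. The function name, the input format and the threshold of 3 standard deviations are assumptions chosen for illustration, not an audit standard. Real ledgers often call for more robust methods.

```python
import statistics

def flag_size_anomalies(history, current, threshold=3.0):
    """Flag current-period amounts far from the account's historical mean.

    history:   dict of account -> list of past amounts (hypothetical input format)
    current:   list of (txn_id, account, amount) tuples
    threshold: number of standard deviations treated as 'unusual' (an assumption)
    """
    flags = []
    for txn_id, account, amount in current:
        past = history.get(account, [])
        if len(past) < 2:
            # Not enough history for a baseline: route to manual review instead.
            flags.append((txn_id, "no baseline"))
            continue
        mean = statistics.mean(past)
        stdev = statistics.stdev(past)  # sample standard deviation
        if stdev == 0:
            # Constant history: any different amount is worth a look.
            if amount != mean:
                flags.append((txn_id, "differs from constant history"))
            continue
        z = (amount - mean) / stdev
        if abs(z) > threshold:
            flags.append((txn_id, f"z={z:.1f}"))
    return flags

# Hypothetical data: C2 looks like a misplaced decimal; C4 has no history at all.
history = {
    "office_supplies": [410, 395, 430, 402, 388, 420],
    "rent": [5000, 5000, 5000],
}
current = [
    ("C1", "office_supplies", 415),
    ("C2", "office_supplies", 4100),
    ("C3", "rent", 5000),
    ("C4", "consulting", 12000),
]
flags = flag_size_anomalies(history, current)
print([txn for txn, _ in flags])  # ['C2', 'C4']
```

Items with no history are routed for review rather than silently passed. That is a deliberate choice: a missing baseline is itself something an auditor would want to know about.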

3. Financial Modelling and Excel Fluency

Reduced time in the detail of financial statements means reduced familiarity with how numbers connect. Financial modelling and Excel training rebuilds this from the ground up - not because junior auditors need to build LBO models, but because auditors who can model independently understand financial statements differently and better than those who cannot.

Excel fluency is the foundation. The ability to trace a number through a model, build a reconciliation from scratch, or construct a simple sensitivity analysis is the kind of competency that fieldwork exposure used to develop passively. It now needs to be built actively.
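A reconciliation built from scratch can be sketched in a few lines. The Python example below is a hypothetical illustration, not a prescribed method. It matches ledger and bank lines on an exact (reference, amount) pair and lists whatever is left on either side. The `reconcile` function, the tuple format and the sample figures are assumptions. Amounts are held as integer cents to avoid floating-point rounding.

```python
from collections import Counter

def reconcile(ledger, bank):
    """Match ledger and bank lines on an exact (reference, amount) pair.

    Both inputs are lists of (reference, amount_in_cents) tuples (hypothetical format).
    Returns unmatched ledger lines, unmatched bank lines, and the total difference.
    """
    ledger_counts = Counter(ledger)
    bank_counts = Counter(bank)
    # Counter subtraction keeps only the items left over after pairing off matches.
    unmatched_ledger = list((ledger_counts - bank_counts).elements())
    unmatched_bank = list((bank_counts - ledger_counts).elements())
    difference = sum(amount for _, amount in ledger) - sum(amount for _, amount in bank)
    return unmatched_ledger, unmatched_bank, difference

# Hypothetical data: INV-102 is recorded as 480.50 in the ledger but cleared at 485.00,
# and INV-103 has not yet reached the bank.
ledger = [("INV-101", 125_000), ("INV-102", 48_050), ("CHQ-330", -20_000), ("INV-103", 9_999)]
bank = [("INV-101", 125_000), ("INV-102", 48_500), ("CHQ-330", -20_000)]

unmatched_ledger, unmatched_bank, difference = reconcile(ledger, bank)
print(unmatched_ledger)  # [('INV-102', 48050), ('INV-103', 9999)]
print(unmatched_bank)    # [('INV-102', 48500)]
print(difference)        # 9549
```

Matched pairs cancel, so the unmatched items on each side account for the whole difference. Here, 480.50 + 99.99 - 485.00 = 95.49, which is a useful check that nothing has been lost. The 480.50 against 485.00 pair is a transposed figure, the kind of error a reconciliation is meant to surface.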

4. Communication and Professional Judgment in Writing

Fieldwork also trained communication - writing findings, documenting conclusions, explaining discrepancies to clients clearly enough that the explanation would hold up in a review. Less fieldwork means less practice.

Presentation and communication skills must be taught directly: structured writing for a professional context, presenting findings clearly to managers and clients, and data storytelling - the ability to take a set of numbers and explain what they mean to someone who was not in the room when you found them.

For Audit Trainees: What You Should Do Before Your Firm Does It For You

If you are an early-career auditor in your first two years, here is the honest version of the situation: your firm will probably address this. But "probably" and "eventually" are not the same as "now," and the gap between where you are and where your role expects you to be is accumulating in the meantime.

Most firms are 12 to 18 months behind the tools they have already deployed. That is not a criticism; it is the normal lag between adoption and adaptation. But it means that if you wait for structured training to arrive, you are waiting through the period when it would have the most impact. That impact covers the AI skills junior auditors now need and everything the role demands around them.

The specific risk is this: if you reach your first senior review without the data instincts and AI literacy that your role now demands, that gap is harder to close retroactively than it would have been to build from the start. The colleagues who built those skills early will not be more qualified than you, but they will be more prepared, and that difference is visible in performance reviews, client interactions, and manager confidence.

The sequence matters. Start with AI literacy. Understand the tools you are already using before you extend your reliance on them. Then build data analysis skills - the pattern recognition that fieldwork used to develop, rebuilt deliberately. Then communication, because the expectation to present findings clearly to managers and clients does not wait until you feel ready.

Proactive training is also a credibility signal. In a cohort where most people are waiting, the ones who are not are noticeable.

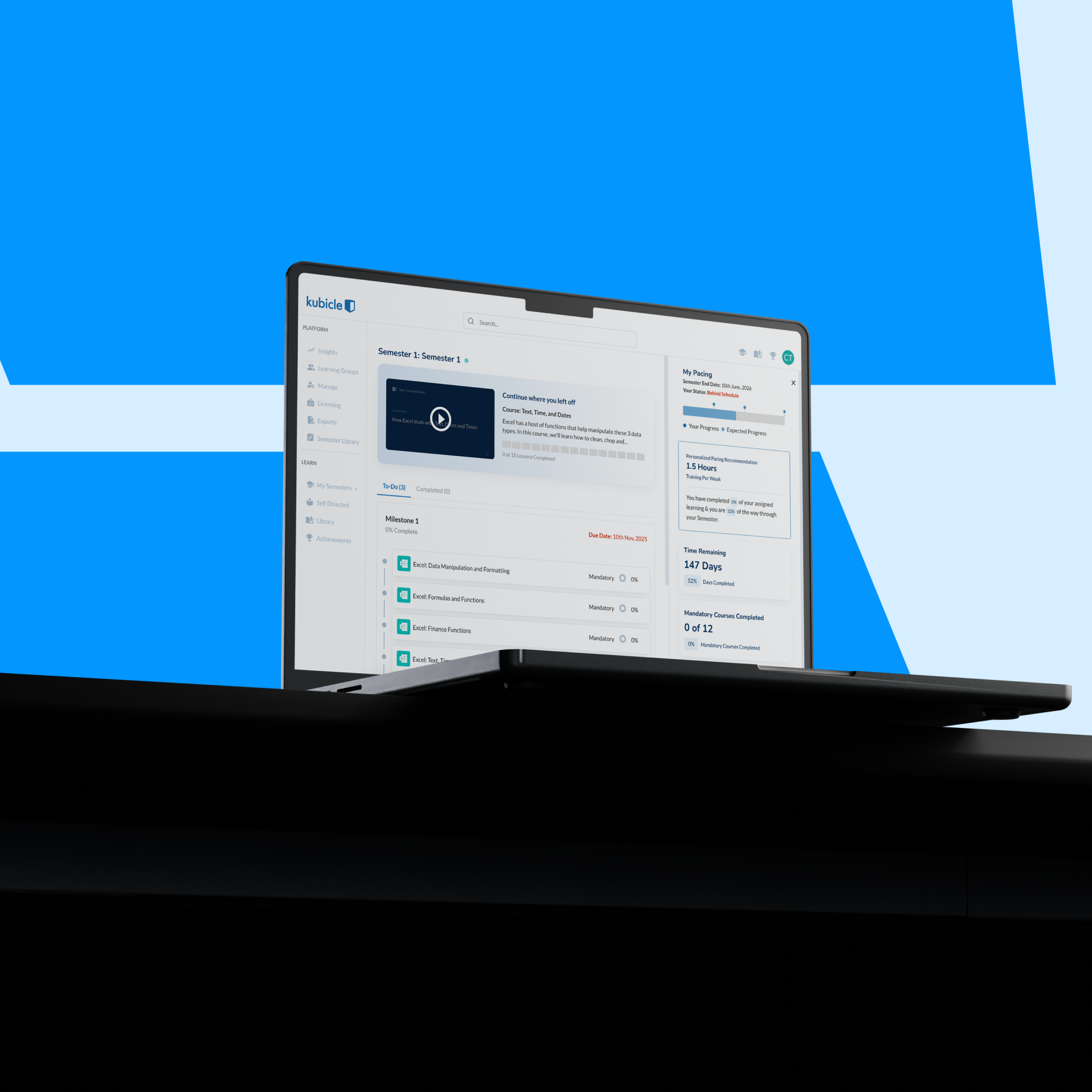

One practical note: if you have ongoing CPE/CPD requirements as part of your qualification, structured training through Kubicle carries CPE/CPD credits. That means the time you spend closing this gap also contributes to your professional development requirements - not competing with them.

If you are in your first two years in audit, the skills that set you apart are not the ones your exams tested. See what early-career auditors are building, and what you can apply from day one. Start with AI literacy training.

For Audit Firms: The Graduate Development Model Has a Structural Gap

Your Big 4 graduate training model, and the equivalent at every mid-market firm, was designed for a world where the work itself was the training. That world has changed faster than the model.

The downstream consequences are specific. Slower senior readiness, as cohorts arrive at the point of carrying client relationships with less accumulated exposure. Weaker communication from junior staff in client-facing situations, because the practice that built it has been compressed. A heavier review burden on managers, compensating for judgment that has not had time to develop. And a widening gap between what the role now demands of junior auditors and what the training model has prepared them to deliver.

The most common objection to this framing is: "We provide technical training and on-the-job coaching - isn't that sufficient?"

It is not, for a specific reason. Technical training covers qualification content. It does not cover applied data skills, AI literacy, or the practical communication competencies that fieldwork used to develop. On-the-job coaching is valuable, but it requires the job to provide the learning opportunity - and the job looks different now. Coaching a junior through an AI-assisted document review is not the same developmental experience as having them work through hundreds of documents manually.

The solution is not a multi-year curriculum redesign. It is a defined audit training program with three features. It targets the specific gaps (AI literacy, data analysis, communication). It is deployed to your graduate intake cohort. It produces measurable outcomes within 90 days.

Start with your current graduate cohort. Pick one skill area. Measure the difference at the 90-day mark. One cohort, one skill, one measurable outcome.

See how audit teams approach structured early career auditor development, and what your graduates will be able to do that they cannot do today.

What a Structured Audit Training Program Actually Looks Like in Practice

The training that closes this gap is not theoretical, and it does not require disrupting audit cycles to deliver.

For a junior auditor in year one or two, the sequence is straightforward:

AI literacy first. Understand the tools, apply professional scepticism to automated outputs, and interpret AI-generated findings in the context of the audit objective. This is not advanced; it is the applied critical thinking that the role now requires from day one.

Data analysis second. Work confidently with structured data sets, identify anomalies, visualise distributions, draw defensible conclusions. This is the direct replacement for the pattern recognition that transaction testing used to build.

Excel and financial modelling third. Build fluency with the core tool of the profession - not at the level of a specialist modeller, but at the level of someone who understands how numbers connect and can trace them independently.

Communication fourth. Write findings clearly, present conclusions to managers and clients, explain what the data shows to someone who was not in the room when you found it.

This is not a replacement for technical qualification training. It is the practical layer that qualifications leave out and that fieldwork used to provide by default.

The results are measurable. Over 75% of Kubicle-trained professionals report stronger data analysis and communication skills after training. 45% apply their skills every day. More than 60% save between 30 minutes and 2 hours per week. These are the outcomes that structured early career auditor development programs are measured against, and the outcomes that a focused audit training program, properly deployed, can deliver.

The AI Skills Gap Junior Auditors Face Is Solvable — But Not By Accident

The cause-and-effect chain is clear. AI removed the repetitive work. The repetitive work built judgment. Judgment does not develop automatically without a replacement mechanism.

Firms that do not replace the scaffold will produce technically credentialled but experientially thin auditors, and will carry the cost of that gap in manager burden, slower senior readiness, and client-facing performance. Trainees who wait for their firm to act will be 12 to 18 months behind the colleagues who did not.

The AI skills gap junior auditors face is real. It is structural. And it is not the kind of problem that resolves itself through better supervision or more time.

The training to close it exists. The only question is who moves first.